Anyone who has read the book “Thinking, Fast and Slow” by Daniel Kahneman knows that humans rarely make predictions by reasoning from first principles; instead, they rely mainly on heuristics and Bayesian inference. Human learning, and comparison with it, is still the most important yardstick for machine learning performance. In this context, probabilistic modeling provides a framework for understanding what learning is. It has therefore emerged as one of the most important theoretical and practical approaches in the development of machine learning. In the following, we describe how uncertainty in models and predictions can be represented and handled using probabilistic programming, Bayesian optimization, data compression, and automatic model discovery.

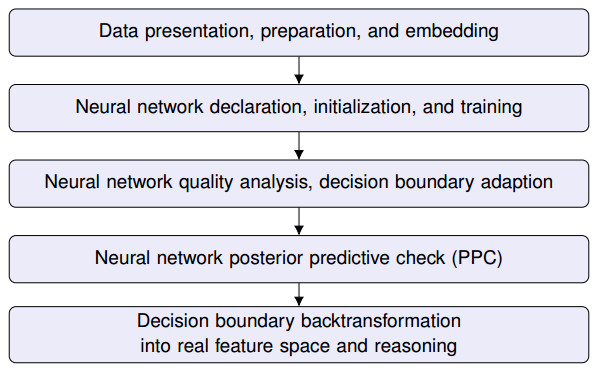

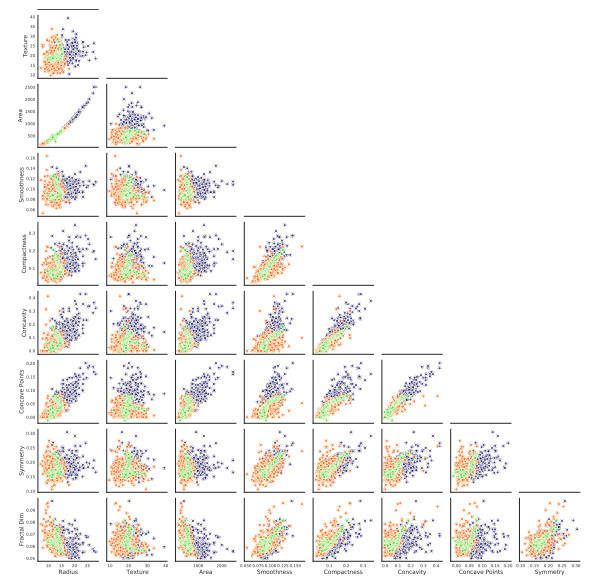

The model was originally developed on quality data from industrial production to predict product quality. Naturally, that dataset could not be used openly in the paper. It is therefore all the more interesting that we could demonstrate the approach on a public dataset: we implemented a probabilistic neural network that predicts whether breast cancer cells are malignant, using the cells' features to formulate and train a model for a binary classification problem.
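To make “probabilistic” concrete for a binary classifier: instead of a single score, the model yields a distribution over predicted probabilities, whose spread expresses how uncertain the model is. The following is a minimal sketch in plain Python, assuming a single logistic unit whose weights follow independent Gaussian distributions (a simple stand-in for a learned weight posterior). The function names, weights, and feature values are hypothetical illustrations, not the authors' network or data.

```python
import math
import random


def sigmoid(z: float) -> float:
    """Logistic function mapping a real-valued score to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predictive_distribution(x, weight_means, weight_stds, bias_mean, bias_std,
                            n_samples=2000, seed=0):
    """Monte Carlo estimate of p(malignant | x) for a hypothetical logistic model.

    Each weight and the bias are drawn from independent Gaussians (an assumed
    stand-in for a learned posterior). Returns the mean predicted probability
    and its sample standard deviation across weight draws; the latter is a
    simple measure of the model's own (epistemic) uncertainty.
    """
    rng = random.Random(seed)  # fixed seed for reproducible sampling
    probs = []
    for _ in range(n_samples):
        # Draw one plausible set of weights from the assumed distribution.
        w = [rng.gauss(m, s) for m, s in zip(weight_means, weight_stds)]
        b = rng.gauss(bias_mean, bias_std)
        z = sum(wi * xi for wi, xi in zip(w, x)) + b
        probs.append(sigmoid(z))
    mean = sum(probs) / n_samples
    var = sum((p - mean) ** 2 for p in probs) / (n_samples - 1)
    return mean, math.sqrt(var)


if __name__ == "__main__":
    # Two hypothetical standardized cell features and weight distributions.
    mean, std = predictive_distribution(
        x=[1.0, 0.5],
        weight_means=[1.5, -0.8], weight_stds=[0.3, 0.6],
        bias_mean=0.0, bias_std=0.2,
    )
    print(f"p(malignant) = {mean:.3f} +/- {std:.3f}")
```

Setting all standard deviations to zero collapses the model to an ordinary deterministic classifier with zero spread. A wide spread, or a mean close to 0.5, could then be used to flag a case for closer inspection rather than forcing a hard label.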

We are Dr. rer. nat. Anastasia-Maria Leventi-Peetz (AI expert at BSI) and Dipl.-Phys. Kai Weber. And now, enjoy reading!